Preface

About This Book

This book is about a fundamental shift in what software engineers actually do.

For most of the history of the profession, the primary bottleneck in software development was writing code: turning a clear understanding of the problem into a working implementation. Tools, languages, and frameworks were all designed to help engineers write code faster, more reliably, and with fewer defects. Being a great engineer meant, in large part, being a great coder.

That bottleneck is moving — fast.

AI agents can now write syntactically correct, contextually relevant code from a natural language description. They can scaffold entire systems, generate test suites, refactor legacy code, and explain unfamiliar codebases in seconds. The implementation layer — once the core of the engineer’s craft — is increasingly automated.

What remains irreducibly human is everything that surrounds implementation: understanding the problem deeply, specifying intent precisely, verifying that what was produced is actually correct, and refining until it is truly right.

This is the new loop of software engineering in the agentic era:

SPECIFY → GENERATE → VERIFY → REFINE

Specify — Define the problem with precision. Decompose ambiguous requirements into clear, agent-sized tasks. Write specifications that leave no room for misinterpretation.

Generate — Delegate to AI agents with confidence. Provide the right context, constraints, and success criteria. Let agents handle the implementation.

Verify — Review outputs critically and systematically. Test assumptions. Catch hallucinations, edge cases, and silent failures before they reach production.

Refine — Iterate. Improve your specifications, your prompts, your verification strategies. Each cycle makes the next one faster and more accurate.

This loop replaces the old SDLC — not by discarding its principles, but by redistributing where human intelligence is most needed. The engineer moves up the abstraction stack: from implementer to architect, from coder to critic, from builder to director.

This book teaches that move. It is not a book about which AI tools to use or how to write clever prompts. It is a book about the new skills that matter when coding is automated: problem decomposition, system thinking, critical verification, and judgment under uncertainty. Skills that compound. Skills that do not expire when the next model is released.

Who This Book Is For

Primary readers:

- Software engineers transitioning from traditional to AI-assisted workflows who want sustainable, tool-independent skills

- Advanced undergraduate and graduate students in software engineering

- Senior developers and tech leads adapting team practices

Secondary readers:

- Engineering managers redefining development processes

- Researchers in software engineering

What you need to bring:

- Comfort with at least one programming language (examples are in Python)

- Familiarity with basic programming concepts: functions, classes, loops, conditionals

- Some exposure to version control (git) and the command line

What you do not need:

- Prior experience with AI coding tools

- A background in machine learning or deep learning

- Advanced knowledge of Python — the examples use standard library features and widely-adopted packages

How to Use This Book

This book is written for a 12-week university course at Monash University, but it is structured so that it can be used in several ways.

Path A: 12-Week Course (Recommended)

Follow the chapters in order, one per week. Each chapter builds on the previous and contributes one milestone to the running course project — a Task Management API that grows from a scope statement (Week 1) to a complete AI-native system (Week 12).

Weeks 1–4: SE Foundations (Chapters 1–4)

Weeks 5–8: AI-Native Practice (Chapters 5–8)

Weeks 9–12: Security, Ethics, Productivity, Future (Chapters 9–12)

The project milestones at the end of each chapter are the primary assessment vehicle. Submit them on a weekly cadence and use peer review to compare approaches.

Path B: Practitioner Self-Study

If you are an experienced engineer who wants to develop AI-native skills specifically, start with Chapter 5 (The AI-Native Development Paradigm) to calibrate where you are, then read Chapters 6–8 in order. Use Chapters 1–4 as reference when the foundations feel shaky, and Chapters 9–12 for the governance and strategy dimensions.

Recommended reading order: 5 → 6 → 7 → 8 → 9 → 10 → 1–4 (reference) → 11 → 12

Path C: Team Reference

If your team is adopting AI tools and you want to use this as a shared reference, the most immediately useful chapters are:

| Need | Chapter |

|---|---|

| Writing better AI specifications | 6 |

| Evaluating AI-generated code | 7 |

| Setting up agents for development tasks | 8 |

| Security review of AI-generated code | 9 |

| AI use policies and ethics | 10 |

| Measuring team productivity | 11 |

Disclaimers

All code examples in this book use Python. This choice is deliberate and transparent, not an endorsement.

This is not a sponsored book. The author has no commercial relationship with any AI provider mentioned.

This book does not represent the views of Monash University. It is written in a personal capacity and is not endorsed by, affiliated with, or produced on behalf of Monash University or any other institution. Readers are responsible for applying the concepts and techniques described here thoughtfully and at their own discretion. The author accepts no liability for decisions or outcomes arising from the use of this material.

Contributions and Feedback

This book is a living document. Errors, outdated examples, and gaps in explanation are inevitable — and fixable.

If you spot a mistake, have a suggestion, or want to contribute an example, case study, or exercise, you are warmly welcome to do so. The source is open and maintained at github.com/awsm-research/agentic-swe-book.

- Report issues — open a GitHub issue with the chapter and page reference

- Suggest improvements — submit a pull request with a clear description of the change and why it helps readers

- Share your project — if you build something interesting using the techniques in this book, open a discussion thread; the best examples may be featured in future editions

All contributions are credited. No contribution is too small.

Associate Professor Kla Tantithamthavorn, Monash University, Australia, 2026

About the Author

A/Prof Kla Tantithamthavorn

Associate Professor in Software Engineering

Faculty of Information Technology, Monash University, Australia

Kla Tantithamthavorn is one of the most productive and impactful software engineering researchers of his generation. He holds the position of Associate Professor in the Faculty of Information Technology at Monash University, where he leads research at the intersection of artificial intelligence and software engineering — a field he has helped define.

His work has been cited over 8,600 times (Google Scholar), with an h-index of 44 and 78 publications each cited ten or more times. He has published more than 80 peer-reviewed articles in the most selective venues in his field, including 12 papers in IEEE Transactions on Software Engineering (TSE), 12 papers at the International Conference on Software Engineering (ICSE), and 8 papers in ACM Transactions on Software Engineering and Methodology (TOSEM) — an output that places him among the top researchers worldwide in empirical software engineering.

Research

Kla’s research programme spans three interconnected themes:

AI-Enabled Software Engineering — developing automated techniques for defect prediction, code review automation, and agile planning that help development teams ship higher-quality software faster. His tools are used by practitioners internationally; AIBugHunter, his Visual Studio Code extension for automated vulnerability detection, has been downloaded over 1,000 times.

Explainable AI for Software Engineering (XAI4SE) — a field he helped pioneer, concerned with making AI-driven software quality predictions interpretable and actionable for developers and managers. His open textbook on XAI4SE has attracted over 20,000 pageviews from 4,300 users across 83 countries.

LLM-Based Software Safety and Security (LLMSecOps) — an emerging programme investigating how large language models can be used to find, explain, and fix security vulnerabilities in software systems, and how the vulnerabilities introduced by LLM-generated code can be systematically detected.

Recognition

- World Top 2% Scientist — Stanford University global ranking

- Most Impactful Early Career Researcher in software engineering, 2013–2020

- IEEE Senior Member

- ARC DECRA Fellow (Australian Research Council Discovery Early Career Researcher Award, 2020–2023)

- JSPS Research Fellowship for Young Scientists — Japan Society for the Promotion of Science

- 2024 Dean’s Award for Excellence in Research Engagement and Impact, Monash University

- ACM SIGSOFT Distinguished Paper Award, ASE 2021

- SANER 2025 Most Influential Paper Award

- NAIST Best PhD Student Award

- Finalist, 2024 Defence and National Security Workforce Awards

Funding

Kla has secured over $2 million in competitive research funding, including:

- CSIRO Next Generation Graduate AI Program — $1.2M (2023–2027), supporting PhD scholarships and industry-partnered AI research

- ARC DECRA — $600K (2020–2023), supporting foundational research in explainable AI for software engineering

Mentorship and Teaching

Kla has supervised 13 PhD students (10 as primary supervisor, 3 as co-supervisor), with 6 successfully graduated and placed in academic and industry roles. He brings the same rigour he applies to research to his teaching: he pioneered the use of EdStem’s Unit Testing Challenges for active learning, designed the 2026 Bachelor of Software Engineering curriculum aligned with SWEBOK 2024, and has consistently improved teaching evaluations — from 4.14 in 2023 to 4.57 in 2024.

This book grew from his undergraduate and postgraduate teaching at Monash University, where he has developed and taught courses on software engineering, AI-native development, and automated software quality.

Service

Kla serves the software engineering research community as:

- Associate Editor, IEEE Transactions on Software Engineering

- Guest Editor, IEEE Software (MLOps and Explainable AI for SE special issues)

- Junior PC Co-Chair, Mining Software Repositories (MSR) 2023 and 2025

- Keynote Speaker at ICSE 2023, ASE 2021, and multiple industry partner events

Selected Recent Publications

- Tantithamthavorn et al. (2026). Pitfalls in language models for code intelligence: A taxonomy and survey. ACM TOSEM.

- Tantithamthavorn et al. (2025). Enhancing large language models for text-to-testcase generation. Journal of Systems and Software.

- Tantithamthavorn et al. (2025). RAGVA: Engineering retrieval augmented generation-based virtual assistants in practice. Journal of Systems and Software.

- Tantithamthavorn et al. (2025). Code readability in the age of large language models: An industrial case study from Atlassian. ICSME 2025.

For a complete publication list, see Google Scholar.

Connect: chakkrit.com

Chapter 1: Software Engineering Fundamentals and Processes

“Software engineering is the establishment of and use of sound engineering principles in order to obtain economically software that is reliable and works efficiently on real machines.” — Friedrich Bauer, 1968 NATO Conference

Learning Objectives

By the end of this chapter, you will be able to:

- Describe the historical evolution of software engineering from its origins to the present day.

- Explain the key software development lifecycle (SDLC) models: Waterfall, Agile, Scrum, and Kanban.

- Articulate how AI is reshaping each phase of the SDLC and what this means for the role of the software engineer.

1.1 What Is Software Engineering?

Software engineering is the disciplined application of engineering principles to the design, development, testing, and maintenance of software systems. Unlike informal programming, software engineering emphasises process, quality, collaboration, and long-term maintainability.

The term was deliberately chosen. In 1968, NATO convened a conference in Garmisch, Germany, to address what organisers called the “software crisis” — a widespread recognition that software projects were routinely over budget, delivered late, and unreliable (Naur & Randell, 1969). The goal of using the word engineering was aspirational: to bring to software the same rigour, predictability, and professionalism that civil or mechanical engineers brought to bridges and engines.

That aspiration has guided the field ever since — and it remains relevant today, even as the tools, languages, and collaborators (including AI systems) have changed dramatically.

Photograph from 1968 NATO Software Engineering Conference (University of Newcastle photo)


Why Software Engineering Matters

Consider two scenarios:

- Scenario A: A solo developer writes a script to process CSV files for personal use. It works, mostly. When it breaks, they fix it themselves.

- Scenario B: A team of 50 engineers builds a financial trading platform used by millions of customers. Bugs can cause financial losses. Downtime can trigger regulatory penalties.

Software engineering is primarily concerned with Scenario B — or with preparing developers who will eventually work on systems of that scale and consequence. The principles covered in this book apply whether you are building a mobile app, a machine learning pipeline, or an AI-assisted development tool.

1.2 A Brief History of Software Engineering

Understanding where software engineering came from helps explain why its practices exist and why they are changing again now.

1.2.1 The Early Years (1940s–1960s)

The first programmers wrote machine code directly — sequences of binary instructions hand-crafted for specific hardware. Programming was considered a clerical task; the real intellectual work was thought to be mathematics and system design.

As software grew more complex through the 1950s, assembly languages and early high-level languages like FORTRAN (1957) and COBOL (1959) emerged. Programs grew from hundreds of lines to hundreds of thousands. Managing this complexity became a serious problem.

1.2.2 The Software Crisis and Structured Programming (1968–1980s)

The 1968 NATO conference crystallised the software crisis. Projects like the IBM OS/360 operating system — documented famously by Fred Brooks in The Mythical Man-Month (Brooks, 1975) — demonstrated that adding more programmers to a late project often made it later. Software complexity was not a resource problem; it was a conceptual one.

The response was structured programming, championed by Dijkstra, Hoare, and Wirth. Programs should be built from clear, hierarchical control structures — sequences, selections, and iterations — rather than the chaos of GOTO statements. This was the beginning of thinking about software as something that could be reasoned about formally.

1.2.3 Object-Oriented Programming and Software Patterns (1980s–1990s)

The 1980s and 1990s saw the rise of object-oriented programming (OOP) — a paradigm in which software is modelled as interacting objects with state and behaviour. Languages like C++, Smalltalk, and later Java brought OOP to mainstream development.

In 1994, the “Gang of Four” — Gamma, Helm, Johnson, and Vlissides — published Design Patterns: Elements of Reusable Object-Oriented Software (Gamma et al., 1994), cataloguing 23 reusable solutions to common software design problems. These patterns are covered in depth in Chapter 3.

1.2.4 The Internet Era and Agile Methods (1990s–2000s)

The World Wide Web transformed software from shrink-wrapped products shipped on disks to continuously evolving services. Release cycles had to shrink from years to weeks. Traditional plan-driven methods struggled to keep pace.

In 2001, seventeen software practitioners gathered in Snowbird, Utah, and published the Agile Manifesto — a short document that valued:

- Individuals and interactions over processes and tools

- Working software over comprehensive documentation

- Customer collaboration over contract negotiation

- Responding to change over following a plan

Agile methods — including Scrum, Extreme Programming (XP), and Kanban — spread rapidly through the industry. They emphasised short iterations, continuous feedback, and adaptive planning rather than upfront specification.

1.2.5 DevOps and Continuous Delivery (2010s)

As agile teams delivered software faster, operations teams struggled to deploy and maintain it. The DevOps movement (Kim et al., 2016) broke down the wall between development and operations, promoting:

- Continuous integration (CI): merging code frequently, building and testing automatically

- Continuous delivery (CD): keeping software always in a deployable state

- Infrastructure as code: managing servers and environments through version-controlled scripts

This shift made the pipeline from code commit to production deployment a first-class engineering concern — covered in depth in Chapter 4.

1.2.6 The AI Era (2020s–Present)

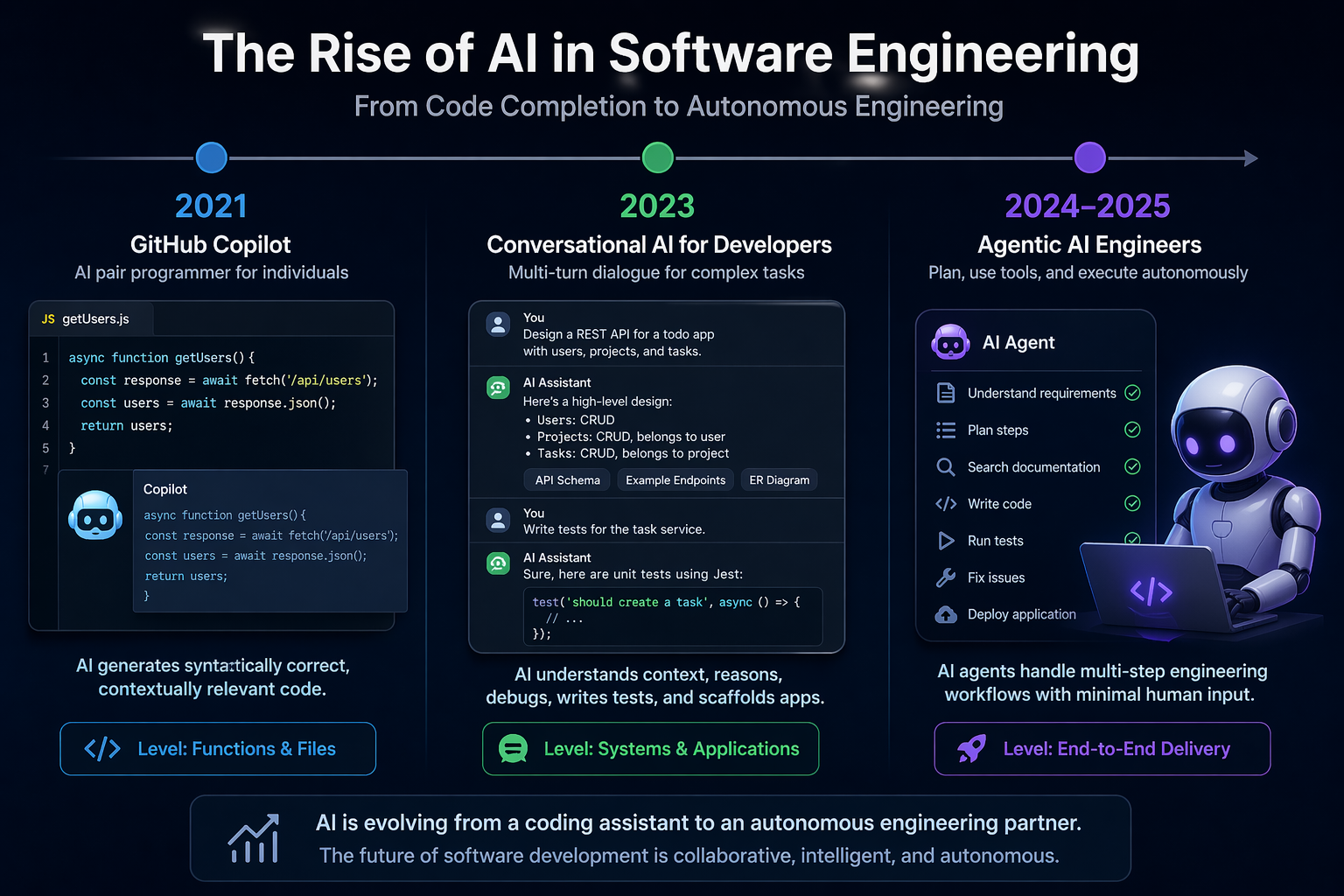

In 2021, GitHub released Copilot, powered by OpenAI Codex — a large language model trained on billions of lines of public code. For the first time, AI could generate syntactically correct, contextually relevant code at the level of individual functions and files.

By 2023, models like GPT-4 and Claude could engage in multi-turn conversations about software design, debug complex issues, write test suites, and generate entire application scaffolds from natural language descriptions.

By 2024–2025, AI coding agents built on agentic architectures, which can plan, use tools, and execute code autonomously, began to handle multi-step engineering tasks with minimal human intervention.

This is where this book begins.

From Copilot to autonomous agents: AI has evolved from completing code to planning, building, testing, and delivering software end to end. (Illustrated by AI)


1.3 The Software Development Lifecycle (SDLC)

The Software Development Lifecycle (SDLC) is a structured process for planning, creating, testing, and deploying software. While specific SDLC models differ in their details, most share a common set of phases:

| Phase | Key Activities |

|---|---|

| Requirements | Understand what the system should do |

| Design | Decide how the system will be structured |

| Implementation | Write the code |

| Testing | Verify the system works correctly |

| Deployment | Release the system to users |

| Maintenance | Fix bugs, add features, keep the system running |

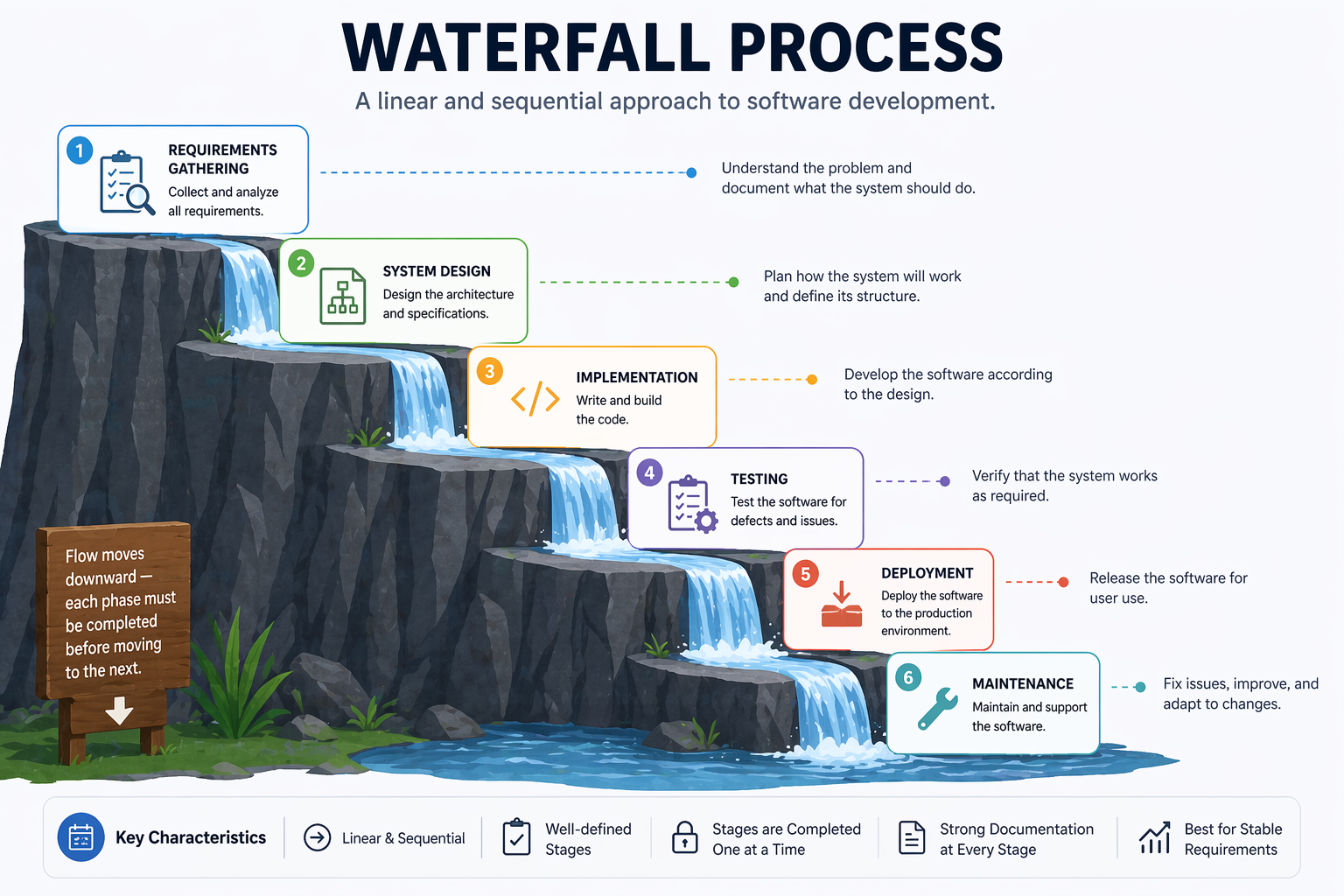

1.3.1 Waterfall

The Waterfall model, introduced by Winston Royce in 1970 (though Royce actually presented it as a flawed approach in the same paper), organises development as a strict sequence of phases (Royce, 1970):

Each phase must be completed before the next begins. The model assumes requirements can be fully and correctly specified at the start.

A Waterfall Software Development Process (Illustrated by AI)


Strengths:

- Clear milestones and deliverables

- Easy to manage and document

- Works well for projects with stable, well-understood requirements (e.g., certain embedded systems, government contracts)

Weaknesses:

- Requirements almost never remain stable

- Errors discovered late are expensive to fix

- Users see no working software until the end

- Poor fit for projects with high uncertainty

1.3.2 Agile Software Development

Agile is not a single methodology but a family of approaches united by the values in the Agile Manifesto. The core insight is that software requirements and solutions evolve through collaboration, and that the ability to respond to change is more valuable than adherence to a plan.

Agile teams work in short cycles called iterations or sprints, typically 1–4 weeks long. Each iteration produces a working, tested increment of software. Stakeholders review the increment and provide feedback that informs the next iteration.

Key Agile principles include:

- Deliver working software frequently (weeks, not months)

- Welcome changing requirements, even late in development

- Business people and developers work together daily

- Simplicity — the art of maximising the amount of work not done — is essential

1.3.3 Scrum

Scrum is the most widely adopted Agile framework (Schwaber & Sutherland, 2020). It defines specific roles, events, and artefacts:

Roles:

- Product Owner: Represents stakeholders; owns and prioritises the product backlog

- Scrum Master: Facilitates the process; removes impediments; coaches the team

- Developers: Self-organising group that delivers the increment (called the Development Team in editions of the Scrum Guide before 2020)

Events:

- Sprint: A time-boxed iteration of 1–4 weeks

- Sprint Planning: The team selects backlog items and plans the sprint

- Daily Scrum: A 15-minute daily standup to synchronise and identify blockers

- Sprint Review: The team demonstrates the increment to stakeholders

- Sprint Retrospective: The team reflects on the process and identifies improvements

Artefacts:

- Product Backlog: An ordered list of everything that might be needed in the product

- Sprint Backlog: The backlog items selected for the current sprint, plus the delivery plan

- Increment: The sum of all completed backlog items at the end of a sprint

┌─────────────────────────────────────────────────────────┐
│                     Product Backlog                     │
│     (ordered list of features, bugs, improvements)      │
└───────────────────────┬─────────────────────────────────┘
                        │ Sprint Planning
                        ▼
┌─────────────────────────────────────────────────────────┐
│                   Sprint (1–4 weeks)                    │
│                                                         │
│    Sprint Backlog → Daily Scrum → Working Increment     │
└───────────────────────┬─────────────────────────────────┘
                        │ Sprint Review + Retrospective
                        ▼
                 Next Sprint...
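As a rough illustration of how the Product Backlog and Sprint Backlog relate, the sketch below walks an ordered backlog and selects items until a capacity is used up. The `BacklogItem` class, the story-point estimates, the example item titles, and the capacity-based selection rule are all assumptions made for this example; Scrum itself does not prescribe how a team decides what fits in a sprint.

```python
from dataclasses import dataclass
from typing import List


@dataclass(frozen=True)
class BacklogItem:
    """One entry in the Product Backlog (hypothetical structure)."""

    title: str
    points: int  # estimated effort; story points are an assumption here


def plan_sprint(product_backlog: List[BacklogItem], capacity: int) -> List[BacklogItem]:
    """Walk the ordered backlog and take each item that still fits the capacity."""
    sprint_backlog: List[BacklogItem] = []
    remaining = capacity
    for item in product_backlog:  # the list is already in priority order
        if item.points <= remaining:
            sprint_backlog.append(item)
            remaining -= item.points
    return sprint_backlog


# Hypothetical backlog, ordered by the Product Owner.
product_backlog = [
    BacklogItem("Create task endpoint", 5),
    BacklogItem("List tasks endpoint", 3),
    BacklogItem("User authentication", 8),
    BacklogItem("Fix date parsing bug", 2),
]

sprint_backlog = plan_sprint(product_backlog, capacity=10)
for item in sprint_backlog:
    print(f"{item.points} pts  {item.title}")
```

With a capacity of 10 points, the 8-point item does not fit after the first two are selected, so the planner skips it and picks up the smaller item behind it. A real team would make that trade-off through discussion rather than a greedy rule.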

1.3.4 Kanban

Kanban, adapted from Toyota’s manufacturing system by David Anderson (Anderson, 2010), is a flow-based method that focuses on visualising work, limiting work in progress (WIP), and continuously improving flow.

A Kanban board visualises work as cards moving through columns:

┌──────────┬──────────────┬──────────────┬──────────┐
│ Backlog  │ In Progress  │ In Review    │ Done     │
│          │ (WIP: 3)     │ (WIP: 2)     │          │
├──────────┼──────────────┼──────────────┼──────────┤
│ Task E   │ Task B       │ Task A       │ Task D   │
│ Task F   │ Task C       │              │          │
│ Task G   │              │              │          │
└──────────┴──────────────┴──────────────┴──────────┘

Key Kanban practices:

- Visualise the workflow: Make all work and its status visible

- Limit WIP: Prevent overloading; finish before starting more

- Manage flow: Track cycle time and throughput; identify bottlenecks

- Improve collaboratively: Use data to drive continuous improvement

Kanban suits teams with highly variable incoming work (e.g., support and maintenance teams) or those who want a lighter-weight alternative to Scrum’s ceremonies.
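To make the WIP-limit idea concrete, here is a minimal sketch of a board that refuses to accept a card when the target column is full. The `KanbanBoard` class and `WipLimitExceeded` exception are hypothetical names invented for this example; the columns, limits, and tasks mirror the board shown earlier in this section.

```python
from typing import Dict, List, Optional


class WipLimitExceeded(Exception):
    """Raised when a move would push a column past its WIP limit."""


class KanbanBoard:
    def __init__(self, wip_limits: Dict[str, Optional[int]]) -> None:
        # A limit of None means the column is unlimited (Backlog, Done).
        self.wip_limits = wip_limits
        self.columns: Dict[str, List[str]] = {name: [] for name in wip_limits}

    def add(self, column: str, task: str) -> None:
        limit = self.wip_limits[column]
        if limit is not None and len(self.columns[column]) >= limit:
            raise WipLimitExceeded(f"{column} is at its WIP limit of {limit}")
        self.columns[column].append(task)

    def move(self, task: str, source: str, target: str) -> None:
        if task not in self.columns[source]:
            raise ValueError(f"{task} is not in {source}")
        # Check the target first so a refused move leaves the board unchanged.
        self.add(target, task)
        self.columns[source].remove(task)


board = KanbanBoard({"Backlog": None, "In Progress": 3, "In Review": 2, "Done": None})
for column, task in [
    ("Backlog", "Task E"), ("Backlog", "Task F"), ("Backlog", "Task G"),
    ("In Progress", "Task B"), ("In Progress", "Task C"),
    ("In Review", "Task A"), ("Done", "Task D"),
]:
    board.add(column, task)

board.move("Task E", "Backlog", "In Progress")  # In Progress now holds 3 tasks

try:
    board.move("Task F", "Backlog", "In Progress")
except WipLimitExceeded as exc:
    print(f"Blocked: {exc}")
```

The refused move is the point of the practice: the team has to finish or hand over something in progress before pulling Task F.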

1.4 Tutorial: Setting Up Your Python Development Environment

This tutorial walks through setting up a Python development environment.

Prerequisites

- Python 3.11 or later (python.org)

- Git (git-scm.com)

- VS Code (code.visualstudio.com)

- A GitHub account (github.com)

Step 1: Create a Virtual Environment

mkdir my_project

cd my_project

# Create and activate a virtual environment

python -m venv venv

source venv/bin/activate # macOS/Linux

# venv\Scripts\activate # Windows

python --version # Confirm activation
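Note that `python --version` reports the interpreter version but does not by itself prove the virtual environment is active. One way to check from inside Python is to compare `sys.prefix` with `sys.base_prefix`, which differ when the interpreter runs inside a virtual environment. The helper name `in_virtual_environment` is an assumption for this sketch.

```python
import sys


def in_virtual_environment() -> bool:
    """Return True when the running interpreter belongs to a virtual environment."""
    return sys.prefix != sys.base_prefix


print(f"Interpreter: {sys.executable}")
print(f"Virtual environment active: {in_virtual_environment()}")
```

If the second line prints `False`, activate the environment again before installing packages in the next steps.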

Step 2: Initialise a Git Repository

git init

cat > .gitignore << 'EOF'

venv/

__pycache__/

*.pyc

.env

EOF

git add .gitignore

git commit -m "Initial commit: add .gitignore"

Step 3: Install Core Development Tools

pip install pytest ruff mypy pre-commit

pip freeze > requirements.txt
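As a quick sanity check that the four tools landed in the active environment, the following sketch looks each one up with the standard library's `importlib.metadata`. The `DEV_TOOLS` list and the `installed_version` helper are names chosen for this example.

```python
from importlib.metadata import PackageNotFoundError, version
from typing import Optional

# The packages installed in this step.
DEV_TOOLS = ["pytest", "ruff", "mypy", "pre-commit"]


def installed_version(package: str) -> Optional[str]:
    """Return the installed version of a package, or None if it is missing."""
    try:
        return version(package)
    except PackageNotFoundError:
        return None


for tool in DEV_TOOLS:
    found = installed_version(tool)
    print(f"{tool}: {found if found is not None else 'NOT INSTALLED'}")
```

The versions printed here should match the pinned entries written to requirements.txt.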

Step 4: Configure Ruff and Mypy

# pyproject.toml

[tool.ruff]

line-length = 88

target-version = "py311"

[tool.ruff.lint]

select = ["E", "F", "I", "N", "W"]

[tool.mypy]

python_version = "3.11"

strict = true

Step 5: Set Up Pre-commit Hooks

# .pre-commit-config.yaml
repos:
  - repo: https://github.com/astral-sh/ruff-pre-commit
    rev: v0.3.0
    hooks:
      - id: ruff
        args: [--fix]
      - id: ruff-format

pre-commit install

Step 6: Verify the Setup

# src/calculator.py
import argparse


def add(a: float, b: float) -> float:
    return a + b


def divide(a: float, b: float) -> float:
    if b == 0:
        raise ValueError("Cannot divide by zero")
    return a / b


def main() -> None:
    parser = argparse.ArgumentParser(description="Simple calculator")
    # Wrapped to stay within the 88-character line length configured for Ruff.
    parser.add_argument(
        "operation", choices=["add", "divide"], help="Operation to perform"
    )
    parser.add_argument("a", type=float, help="First number")
    parser.add_argument("b", type=float, help="Second number")
    args = parser.parse_args()

    if args.operation == "add":
        print(add(args.a, args.b))
    elif args.operation == "divide":
        print(divide(args.a, args.b))


if __name__ == "__main__":
    main()

Run it from the command line:

python src/calculator.py add 3 5 # Output: 8.0

python src/calculator.py divide 10 2 # Output: 5.0

python src/calculator.py divide 1 0 # Raises: ValueError

# tests/test_calculator.py
import pytest

from src.calculator import add, divide


def test_add() -> None:
    assert add(2, 3) == 5
    assert add(-1, 1) == 0


def test_divide() -> None:
    assert divide(10, 2) == 5.0


def test_divide_by_zero() -> None:
    with pytest.raises(ValueError):
        divide(1, 0)

python -m pytest tests/ -v # python -m adds the current directory to sys.path so src.calculator can be imported

Expected output:

tests/test_calculator.py::test_add PASSED

tests/test_calculator.py::test_divide PASSED

tests/test_calculator.py::test_divide_by_zero PASSED

3 passed in 0.12s
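The tests above compare floats with `==`. That is safe for results such as 5.0, which binary floating point represents exactly, but it breaks down for values that are not exactly representable. The sketch below shows the problem and one way around it using `math.isclose` from the standard library; pytest offers `pytest.approx` for the same purpose. The two functions are repeated here only so the example runs on its own.

```python
import math


def add(a: float, b: float) -> float:
    return a + b


def divide(a: float, b: float) -> float:
    if b == 0:
        raise ValueError("Cannot divide by zero")
    return a / b


# 0.1 and 0.2 have no exact binary representation, so the sum is slightly off.
print(add(0.1, 0.2) == 0.3)              # False
print(math.isclose(add(0.1, 0.2), 0.3))  # True

# An absolute tolerance states how much error the test is willing to accept.
print(math.isclose(divide(2, 3), 0.6667, abs_tol=1e-4))  # True
```

When you extend the calculator, prefer an approximate comparison for any test whose expected value comes from division or from decimal fractions.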

This environment — version control, dependency isolation, linting, type checking, pre-commit hooks, and a test framework — is the foundation on which every subsequent chapter builds.

Step 7: Make Your First Meaningful Commit

With a passing test suite, you are ready to make a proper commit. Good commit practice starts here.

Stage only the files you intend to commit:

git add src/calculator.py tests/test_calculator.py pyproject.toml .pre-commit-config.yaml requirements.txt

Check what is staged before committing:

git status

git diff --staged

Write a descriptive commit message. A good message has a short subject line (under 72 characters) and, when needed, a body explaining why — not just what:

git commit -m "Add calculator module with add and divide operations

- Implements add() and divide() with type hints

- divide() raises ValueError on division by zero

- CLI entry point via argparse

- Unit tests covering happy path and error cases"
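The subject-line guideline above is easy to check mechanically. The following is a minimal sketch, assuming the 72-character limit stated in this step plus the common Git convention of a blank line between subject and body (an addition not stated in the text). The function name `check_commit_message` is hypothetical.

```python
from typing import List


def check_commit_message(message: str, max_subject_length: int = 72) -> List[str]:
    """Return a list of problems found; an empty list means the message passes."""
    problems: List[str] = []
    lines = message.splitlines()
    subject = lines[0] if lines else ""

    if not subject.strip():
        problems.append("subject line is empty")
    if len(subject) >= max_subject_length:
        problems.append(
            f"subject line has {len(subject)} characters; "
            f"keep it under {max_subject_length}"
        )
    # Convention assumed here: the second line, if present, should be blank.
    if len(lines) > 1 and lines[1].strip():
        problems.append("leave a blank line between the subject and the body")
    return problems


message = """Add calculator module with add and divide operations

- Implements add() and divide() with type hints
- divide() raises ValueError on division by zero
"""

print(check_commit_message(message))   # []
print(check_commit_message("x" * 80))  # one problem: subject too long
```

A check like this could run as a commit-msg hook, although wiring it into pre-commit is outside the scope of this tutorial.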

View your commit history:

git log --oneline

Expected output:

a3f92c1 Add calculator module with add and divide operations

e1b4d07 Initial commit: add .gitignore

Step 8: Understand What Not to Commit

Some files should never be committed. Your .gitignore already covers the most common cases, but it helps to understand why:

| File / Pattern | Why |

|---|---|

| venv/ | Virtual environment (recreatable from requirements.txt) |

| __pycache__/, *.pyc | Python bytecode (generated automatically) |

| .env | API keys and secrets (never commit credentials) |

| *.egg-info/ | Package build artefacts |

| .mypy_cache/, .ruff_cache/ | Tool caches (not part of the project) |

Verify nothing sensitive is staged:

git status

git diff --staged --name-only

If you accidentally stage a secret, remove it before committing:

git restore --staged .env
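The manual check above can be automated. The sketch below flags staged paths whose file names match a small list of sensitive patterns. The pattern list and both function names are assumptions for illustration, and the list is far from complete. `staged_files()` runs the same `git diff --staged --name-only` command shown earlier, so it only works inside a Git repository.

```python
import subprocess
from fnmatch import fnmatch
from typing import List

# Hypothetical patterns for this sketch; adjust them to your project.
SENSITIVE_PATTERNS = [".env", ".env.*", "*.pem", "*.key"]


def find_sensitive(paths: List[str]) -> List[str]:
    """Return the paths whose file name matches a sensitive pattern."""
    flagged = []
    for path in paths:
        name = path.rsplit("/", 1)[-1]  # git reports paths with forward slashes
        if any(fnmatch(name, pattern) for pattern in SENSITIVE_PATTERNS):
            flagged.append(path)
    return flagged


def staged_files() -> List[str]:
    """List staged paths; must be called from inside a Git repository."""
    result = subprocess.run(
        ["git", "diff", "--staged", "--name-only"],
        capture_output=True,
        text=True,
        check=True,
    )
    return result.stdout.splitlines()


# Demonstration with an explicit list, so the example runs anywhere.
example = ["src/calculator.py", ".env", "config/server.key", "README.md"]
print(find_sensitive(example))
```

Inside a repository, `find_sensitive(staged_files())` returns the staged files that deserve a second look before you commit.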

Step 9: Activity — Extend and Commit

Complete the following activity to practise the full edit-test-commit cycle:

- Add a multiply(a, b) function and a subtract(a, b) function to src/calculator.py.

- Add CLI support for both operations in main().

- Write at least two tests for each new function in tests/test_calculator.py.

- Run the full check before committing:

ruff check src/ tests/

mypy src/

python -m pytest tests/ -v

- Stage and commit your changes with a meaningful message:

git add src/calculator.py tests/test_calculator.py

git commit -m "Add multiply and subtract operations to calculator"

- Verify the commit appears in your log:

git log --oneline

A clean log with descriptive messages is part of professional software engineering practice — and it becomes especially important when collaborating with teammates or reviewing AI-generated changes.

Chapter 2: Requirements Engineering and Specification

“The hardest single part of building a software system is deciding precisely what to build.” — Fred Brooks, The Mythical Man-Month (1975)

Learning Objectives

By the end of this chapter, you will be able to:

- Explain the purpose and phases of requirements engineering.

- Apply multiple elicitation techniques to gather requirements from stakeholders.

- Distinguish between functional and non-functional requirements and write both clearly.

- Define epics, user stories, and acceptance criteria, and construct each for a realistic system.

- Write a Definition of Done for a software team.

- Use AI tools to assist with requirements generation and critique — and identify where AI assistance breaks down.

2.1 What Is Requirements Engineering?

Requirements engineering (RE) is the process of defining, documenting, and maintaining the requirements for a software system. It sits at the beginning of every software project, and its quality has an outsized effect on everything that follows: design decisions, implementation choices, testing strategies, and ultimately whether the system delivers value to its users.

The cost of fixing a requirements defect grows dramatically as development progresses. Boehm and Papaccio (1988) found that a defect discovered during requirements costs roughly 1–2 units to fix; the same defect discovered during testing costs 10–100 units; discovered in production, it can cost 100–1000 units. Getting requirements right early is one of the highest-return investments in software engineering.
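To see what those ratios mean in practice, the sketch below converts the unit ranges quoted above into money. The unit cost of $100 and the batch of five defects are hypothetical values chosen only to make the arithmetic visible; they are not taken from the cited study.

```python
from typing import Dict, Tuple

# Relative cost ranges, in abstract units, as quoted in the paragraph above.
COST_UNITS: Dict[str, Tuple[int, int]] = {
    "requirements": (1, 2),
    "testing": (10, 100),
    "production": (100, 1000),
}

UNIT_COST = 100  # hypothetical: dollars per unit, for illustration only


def cost_range(phase: str, defects: int = 1) -> Tuple[int, int]:
    """Return the (low, high) cost of fixing `defects` defects found in `phase`."""
    low, high = COST_UNITS[phase]
    return (low * UNIT_COST * defects, high * UNIT_COST * defects)


for phase in COST_UNITS:
    low, high = cost_range(phase, defects=5)
    print(f"{phase:<12} ${low:>9,} to ${high:>9,}")
```

Under these assumptions, five defects that would cost at most $1,000 to fix during requirements could cost up to $500,000 if they reach production.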

Requirements engineering comprises four main activities:

- Elicitation: Discovering what stakeholders need

- Analysis: Resolving conflicts, prioritising, and checking feasibility

- Specification: Documenting requirements in a clear, agreed form

- Validation: Confirming that documented requirements reflect actual stakeholder needs

These activities are not strictly sequential. In practice, they iterate: elicitation reveals conflicts that require analysis; analysis raises new questions that require further elicitation; validation reveals gaps that require re-specification.

2.2 Eliciting Requirements

Elicitation is the most people-intensive phase of requirements engineering. Requirements do not simply exist waiting to be discovered — they must be actively constructed through dialogue between engineers and stakeholders.

Stakeholders include anyone with a stake in the system:

- Users: People who interact with the system directly

- Clients / customers: People or organisations paying for or commissioning the system

- Domain experts: People with specialist knowledge the system must encode

- Regulators: Bodies whose rules constrain the system

- Developers and operators: People who build and run the system

2.2.1 Interviews

One-on-one or small group interviews are the most common elicitation technique. They allow engineers to explore individual stakeholders’ perspectives in depth, ask follow-up questions, and observe non-verbal cues.

Structured interviews use a fixed set of questions, making responses comparable across stakeholders. Semi-structured interviews use a prepared guide but allow the interviewer to follow interesting threads. Unstructured interviews are open-ended conversations — useful early in a project when the problem space is poorly understood.

Effective interview questions:

- “Walk me through a typical day in your role. Where does [the system] fit in?”

- “What is the most frustrating part of the current process?”

- “What would success look like for you, six months after this system goes live?”

- “What happens when [edge case]? How do you handle that today?”

2.2.2 Workshops

Requirements workshops bring multiple stakeholders together in a structured session facilitated by a trained requirements engineer. They are particularly effective for resolving conflicts between stakeholder groups and building shared understanding quickly.

Joint Application Development (JAD) sessions (Wood & Silver, 1995) are a formalised workshop technique in which developers and users jointly define system requirements over 1–5 days. The intensity accelerates decision-making and builds stakeholder buy-in.

2.2.3 Observation and Ethnography

Sometimes the best way to understand requirements is to watch people do their work. Contextual inquiry (Beyer & Holtzblatt, 1998) involves working alongside users in their natural environment, observing what they actually do rather than what they say they do. This often surfaces tacit knowledge — practices and workarounds that users perform automatically and would never think to mention in an interview.

2.2.4 Document Analysis

Existing documents — process manuals, legacy system specifications, regulatory guidelines, error logs, support tickets — are a rich source of requirements for systems that replace or augment existing functionality. Analysing support tickets reveals the most common failure modes of a current system; regulatory guidelines reveal mandatory constraints.

2.2.5 Prototyping

Showing stakeholders a low-fidelity prototype (wireframes, paper mockups, a clickable UI mockup) is often more effective than describing a system in words. Prototypes make abstract requirements concrete and frequently reveal misunderstandings that would otherwise persist until late in development.

2.3 Functional and Non-Functional Requirements

All requirements can be classified as either functional or non-functional.

2.3.1 Functional Requirements

Functional requirements describe what the system must do — specific behaviours, functions, or features. They define the interactions between the system and its environment.

Format: Functional requirements are often written as:

The system shall [action] [object] [condition/qualifier].

Examples for a task management system:

- The system shall allow authenticated users to create tasks with a title, description, due date, and priority level.

- The system shall allow project managers to assign tasks to one or more team members.

- The system shall send an email notification to an assignee within 5 minutes of being assigned a task.

- The system shall allow users to filter tasks by status (open, in progress, completed, cancelled).

2.3.2 Non-Functional Requirements

Non-functional requirements (NFRs) describe how well the system must perform its functions: the qualities that constrain its operation. They are sometimes called quality attributes or system properties.

NFRs are often harder to specify precisely than functional requirements, but they are equally important. A system that does the right thing slowly, insecurely, or unreliably fails its users just as surely as one that does the wrong thing.

Key categories of non-functional requirements (ISO/IEC 25002:2024):

| Category | Description | Example |
|---|---|---|
| Performance | Speed and throughput | The API shall respond to 95% of requests within 200ms under a load of 1,000 concurrent users. |
| Reliability | Uptime and fault tolerance | The system shall achieve 99.9% uptime (≤8.76 hours downtime per year). |
| Security | Protection from threats | All data at rest shall be encrypted using AES-256. |
| Scalability | Ability to handle growth | The system shall support up to 100,000 active users without architectural changes. |
| Usability | Ease of use | A new user shall be able to create their first task within 3 minutes of registering. |
| Maintainability | Ease of change | All modules shall have unit test coverage of at least 80%. |
| Portability | Ability to run in different environments | The system shall run on any Linux environment with Python 3.11+. |
| Compliance | Adherence to regulations | The system shall comply with GDPR requirements for personal data storage and processing. |

The danger of vague NFRs: Non-functional requirements must be measurable to be useful. “The system should be fast” is not a requirement — it is a wish. “The API shall respond to 95% of requests within 200ms under a load of 1,000 concurrent users” is testable.
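To make the point about testability concrete, the following minimal sketch checks a batch of recorded latencies against the 95% / 200ms target and converts an availability target into a yearly downtime budget. The function names and the sample data are hypothetical and exist only for illustration.

```python
HOURS_PER_YEAR = 365 * 24  # 8,760 hours in a non-leap year


def meets_latency_target(
    latencies_ms: list[float],
    threshold_ms: float = 200.0,
    required_fraction: float = 0.95,
) -> bool:
    """Return True if enough requests finished within the threshold."""
    if not latencies_ms:
        raise ValueError("No latency samples recorded")
    within = sum(1 for latency in latencies_ms if latency <= threshold_ms)
    return within / len(latencies_ms) >= required_fraction


def downtime_budget_hours(availability: float) -> float:
    """Convert an availability target (e.g. 0.999) into allowed downtime per year."""
    return (1.0 - availability) * HOURS_PER_YEAR


# Hypothetical sample: 96 fast requests and 4 slow ones
samples = [120.0] * 96 + [450.0] * 4
print(meets_latency_target(samples))           # True (96% are within 200ms)
print(round(downtime_budget_hours(0.999), 2))  # 8.76
```

A vague requirement such as "the system should be fast" offers no equivalent of `threshold_ms` or `required_fraction`, so no such check can be written for it.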

2.3.3 The FURPS+ Model

The FURPS+ model (Grady, 1992) provides a checklist for ensuring requirements coverage:

- Functionality: Features and capabilities

- Usability: User interface and user experience

- Reliability: Availability, fault tolerance, recoverability

- Performance: Speed, throughput, capacity

- Supportability: Testability, maintainability, portability

- +: Constraints (design, implementation, interface, physical)

2.4 Quality Attributes of Good Requirements

Individual requirements should satisfy the following quality criteria. The IEEE 830 standard (IEEE, 1998) and its successor ISO/IEC/IEEE 29148 (2018) are the canonical references.

| Attribute | Description | Bad Example | Good Example |
|---|---|---|---|
| Correct | Accurately represents stakeholder needs | n/a | Validated with stakeholders |
| Unambiguous | Has only one possible interpretation | “The system shall be user-friendly” | “A new user shall create their first task in under 3 minutes” |
| Complete | Covers all necessary conditions | “Users can log in” | “Users can log in with email/password; failed attempts are logged; accounts lock after 5 failures” |
| Consistent | Does not conflict with other requirements | Two requirements with contradictory session expiry rules | All session management requirements align |
| Verifiable | Can be tested or inspected | “The system shall be reliable” | “The system shall achieve 99.9% uptime” |
| Traceable | Can be linked to its source | Requirement with no stakeholder owner | Requirement tagged to specific stakeholder interview |
| Prioritised | Ranked by importance | No priority information | MoSCoW category assigned |

2.5 Epics, User Stories, and Work Items

In Agile teams, requirements are typically captured as a hierarchy of work items:

Epic
└── Feature / Capability
    └── User Story
        └── Task (implementation subtask)

2.5.1 Epics

An epic is a large body of work that can be broken down into smaller stories. Epics represent significant chunks of functionality — typically too large to complete in a single sprint.

Example epics for a task management system:

- User Authentication and Authorisation

- Task Lifecycle Management (create, assign, update, complete)

- Notifications and Alerts

- Reporting and Analytics

2.5.2 User Stories

Each epic decomposes into user stories — small, independently deliverable increments of value.

Epic: Task Lifecycle Management

| ID | User Story |
|---|---|
| US-01 | As a user, I want to create a task with a title and description so that I can record work that needs to be done. |
| US-02 | As a user, I want to assign a due date to a task so that I can track deadlines. |
| US-03 | As a project manager, I want to assign a task to a team member so that responsibilities are clear. |
| US-04 | As a user, I want to mark a task as complete so that the team can see progress. |
| US-05 | As a user, I want to add comments to a task so that I can communicate context without leaving the tool. |

2.5.3 Story Points

Story points are a unit of measure for estimating the relative effort or complexity of user stories. They are intentionally abstract — they do not map directly to hours or days — encouraging teams to think about relative complexity rather than precise time estimates.

Teams typically use a Fibonacci-based scale: 1, 2, 3, 5, 8, 13, 21 (many Planning Poker decks use a modified version that rounds the larger values to 20, 40, and 100). The increasing gaps reflect growing uncertainty in estimating large, complex work.

Planning Poker is a common estimation technique (Grenning, 2002): each team member privately selects a card with their estimate; all cards are revealed simultaneously; significant discrepancies prompt discussion until the team reaches consensus.

Story points enable velocity tracking — the total points completed per sprint gives the team’s velocity, which predicts future throughput and informs release planning.
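The velocity arithmetic can be sketched in a few lines. This is an illustrative calculation only: the sprint history and backlog size are made-up numbers, and a simple mean is just one of several ways teams forecast.

```python
import math


def average_velocity(completed_points: list[int]) -> float:
    """Mean story points completed per sprint over the sprints observed so far."""
    if not completed_points:
        raise ValueError("Need at least one completed sprint")
    return sum(completed_points) / len(completed_points)


def sprints_remaining(backlog_points: int, velocity: float) -> int:
    """Whole sprints needed to finish the backlog at the given velocity."""
    if velocity <= 0:
        raise ValueError("Velocity must be positive")
    return math.ceil(backlog_points / velocity)


history = [21, 18, 24]  # hypothetical points completed in the last three sprints
velocity = average_velocity(history)
print(velocity)                          # 21.0
print(sprints_remaining(100, velocity))  # 5
```

Because story points are relative, a forecast like this is only meaningful within one team; velocities are not comparable across teams.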

2.5.4 Tasks

Each user story is implemented through one or more tasks — specific technical actions. Tasks are not user-visible; they are engineering sub-steps.

Example tasks for US-03 (assign a task to a team member):

- Design the POST /tasks/{id}/assign API endpoint

- Implement the assignment logic and database update

- Write unit tests for the assignment service

- Write integration tests for the assignment endpoint

- Update API documentation

2.6 Prioritisation: The MoSCoW Framework

Once user stories are written, the team must decide which to build first. The MoSCoW framework (Clegg & Barker, 1994) provides a shared vocabulary for this:

| Category | Meaning | Guideline |
|---|---|---|
| Must Have | Non-negotiable; the system cannot launch without these | ~60% of effort |
| Should Have | Important but not vital; workarounds exist if omitted | ~20% of effort |
| Could Have | Nice to have; included only if time permits | ~20% of effort |
| Won’t Have | Explicitly excluded from this release | Documented, not built |

The “Won’t Have” category is often the most valuable: it makes explicit what is being deliberately deferred, turning unspoken assumptions into shared agreements.

Example — a task management application:

| Feature | MoSCoW |
|---|---|
| Create, read, update, delete tasks | Must Have |
| Assign tasks to team members | Must Have |
| Email notifications on task assignment | Should Have |
| Drag-and-drop task reordering | Could Have |
| Integration with Slack | Won’t Have (this release) |
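One way to check a plan against the effort guidelines is to total the estimates per category. The sketch below does this for the example features; the story-point estimates and the short category labels are assumptions added for illustration and are not part of the framework.

```python
from collections import defaultdict

# Hypothetical backlog: (feature, MoSCoW category, estimated story points)
backlog = [
    ("Create, read, update, delete tasks", "Must", 13),
    ("Assign tasks to team members", "Must", 5),
    ("Email notifications on task assignment", "Should", 5),
    ("Drag-and-drop task reordering", "Could", 3),
    ("Integration with Slack", "Won't", 8),
]


def effort_share(items: list[tuple[str, str, int]]) -> dict[str, float]:
    """Return each category's share of planned effort, excluding Won't Have items."""
    totals: dict[str, int] = defaultdict(int)
    for _, category, points in items:
        if category != "Won't":  # deferred work is documented, not planned
            totals[category] += points
    planned_total = sum(totals.values())
    return {category: points / planned_total for category, points in totals.items()}


shares = effort_share(backlog)
for category, share in shares.items():
    print(f"{category}: {share:.0%}")
```

With these assumed estimates, Must Have items take about 69% of planned effort, which is above the ~60% guideline and would be a prompt to revisit the categorisation or the estimates.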

2.7 Scope Creep

Even with user stories and prioritisation in place, projects face a persistent risk: scope creep — the gradual, uncontrolled expansion of scope beyond its original boundaries. It is one of the most common causes of project failure (PMI, 2021).

Scope creep happens when:

- Stakeholders request new features after the project has started

- Requirements are poorly defined, leaving room for interpretation

- The team adds features without formal approval

- External factors force new work mid-project

MoSCoW directly addresses this: by explicitly documenting what is Won’t Have, teams create a shared boundary that makes adding new scope a visible, deliberate decision rather than a gradual drift. Regular backlog grooming and formal change control reinforce that boundary. Together, user stories, prioritisation, and scope discipline form the core of agile requirements management.

2.8 Acceptance Criteria

Acceptance criteria define the specific conditions that must be satisfied for a user story to be considered done. They bridge requirements and testing: each acceptance criterion should be directly testable.

The most common format is Gherkin — a structured natural language syntax used by the Cucumber testing framework (Wynne & Hellesøy, 2012):

Given [some initial context]

When [an action occurs]

Then [an observable outcome]

Example — US-03: Assign a task to a team member

Scenario: Successfully assigning a task
  Given I am logged in as a project manager
  And a task with ID "123" exists in my project
  And a team member "alice@example.com" exists in my project
  When I send POST /tasks/123/assign with body {"assignee": "alice@example.com"}
  Then the response status code is 200
  And the task's assignee field is updated to "alice@example.com"
  And alice receives an email notification within 5 minutes

Scenario: Attempting to assign to a non-member
  Given I am logged in as a project manager
  And a task with ID "123" exists in my project
  When I send POST /tasks/123/assign with body {"assignee": "nonmember@example.com"}
  Then the response status code is 400
  And the response body contains {"error": "User is not a member of this project"}

Scenario: Attempting to assign without permission
  Given I am logged in as a regular user (not a project manager)
  When I send POST /tasks/123/assign with body {"assignee": "alice@example.com"}
  Then the response status code is 403
  And the response body contains {"error": "Insufficient permissions"}

Well-written acceptance criteria cover:

- The happy path (the successful scenario)

- Error cases (invalid input, unauthorised access)

- Edge cases (boundary conditions, concurrent operations)
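Because each criterion is directly testable, the three scenarios for US-03 translate almost line for line into automated tests. The sketch below uses a hypothetical in-memory `assign_task` function as a stand-in for the real endpoint; its name, signature, and role strings are assumptions, and the email notification step is left out for brevity.

```python
# Hypothetical in-memory stand-in for POST /tasks/{id}/assign
PROJECT_MEMBERS = {"alice@example.com"}
TASKS = {"123": {"assignee": None}}


def assign_task(task_id: str, assignee: str, caller_role: str) -> tuple[int, dict]:
    """Return (status_code, body) for an assignment request."""
    if caller_role != "project_manager":
        return 403, {"error": "Insufficient permissions"}
    if assignee not in PROJECT_MEMBERS:
        return 400, {"error": "User is not a member of this project"}
    TASKS[task_id]["assignee"] = assignee
    return 200, {"assignee": assignee}


def test_successful_assignment() -> None:
    # Given a project manager, an existing task, and a project member
    status, _ = assign_task("123", "alice@example.com", "project_manager")
    assert status == 200
    assert TASKS["123"]["assignee"] == "alice@example.com"


def test_assign_to_non_member() -> None:
    status, body = assign_task("123", "nonmember@example.com", "project_manager")
    assert status == 400
    assert body == {"error": "User is not a member of this project"}


def test_assign_without_permission() -> None:
    status, body = assign_task("123", "alice@example.com", "regular_user")
    assert status == 403
    assert body == {"error": "Insufficient permissions"}


for test in (test_successful_assignment, test_assign_to_non_member,
             test_assign_without_permission):
    test()
print("all scenarios pass")
```

Each test mirrors one scenario: the Given steps become setup, the When step becomes the call, and the Then steps become assertions.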

2.9 Definition of Done

The Definition of Done (DoD) is a shared agreement about what “complete” means for any piece of work. It is a quality gate: a story is not done until it satisfies every item on the DoD checklist (Schwaber & Sutherland, 2020).

Example Definition of Done for the course project:

- All acceptance criteria pass

- Unit tests written and passing (minimum 80% coverage for new code)

- Integration tests written and passing

- Code reviewed by at least one other team member

- Linter and type checker pass with no errors

- API documentation updated (if applicable)

- No new security vulnerabilities introduced (verified by automated scan)

- Deployed to the staging environment and manually tested

A DoD prevents “almost done” from becoming a permanent state and makes quality expectations explicit and consistent across the team.

Chapter 3: Software Design, Architecture, and Patterns

“A designer knows he has achieved perfection not when there is nothing left to add, but when there is nothing left to take away.” — Antoine de Saint-Exupéry

Learning Objectives

By the end of this chapter, you will be able to:

- Read and produce UML diagrams: use case, class, sequence, and component diagrams.

- Compare and select appropriate architectural patterns for a given system.

- Identify and apply common Gang of Four design patterns.

- Apply SOLID principles and other design guidelines to produce maintainable code.

- Write clean, readable Python code following established conventions.

- Use AI tools to assist with design and scaffolding — and critically evaluate what they produce.

3.1 Why Design Matters

Writing code that works is necessary but not sufficient. Code must also be maintainable — readable and modifiable by other developers (and by your future self) over months and years. Poor design decisions made early in a project compound over time: a monolithic module that is difficult to test becomes more difficult to test as it grows; a tangled dependency structure becomes harder to untangle as more code depends on it.

Software design is the activity of deciding how a system will be structured before (or alongside) the activity of writing code. Good design:

- Makes the system easier to understand

- Makes the system easier to test

- Makes the system easier to change in response to new requirements

- Reduces the risk of introducing bugs when modifying existing functionality

This chapter covers design at three levels:

- Diagrams: visual representations of system structure and behaviour

- Architecture: high-level decisions about system organisation

- Patterns: proven solutions to recurring design problems

3.2 UML Diagrams

The Unified Modeling Language (UML) is a standardised notation for visualising software systems (OMG, 2017). It provides a shared vocabulary for communicating design decisions between developers, architects, and stakeholders.

We focus on four diagram types that are most commonly used in practice.

3.2.1 Use Case Diagrams

Use case diagrams show the interactions between actors (users or external systems) and the use cases (features) a system provides. They communicate system scope at a high level and are useful for stakeholder communication early in a project.

Elements:

- Actor: A stick figure representing a user role or external system

- Use case: An oval representing a system function

- Association: A line connecting an actor to the use cases they participate in

- System boundary: A rectangle enclosing all use cases in scope

Example — Task Management System:

┌──────────────────────────────────────────────────┐
│              Task Management System              │
│                                                  │
│  (Create Task)    (Assign Task)    (Close Task)  │
│                                                  │
│  (View Dashboard)    (Generate Report)           │
│                                                  │
│  (Receive Notification)                          │
└──────────────────────────────────────────────────┘
        │                  │                  │
      User              Manager         Email Service

Use case diagrams intentionally omit implementation detail — they show what the system does, not how.

3.2.2 Class Diagrams

Class diagrams show the static structure of a system — the classes, their attributes and methods, and the relationships between them. They are the most widely used UML diagram type for communicating object-oriented design.

Key relationships:

- Association: A uses B (solid line)

- Aggregation: A has B, B can exist without A (hollow diamond)

- Composition: A contains B, B cannot exist without A (filled diamond)

- Inheritance: A is a B (hollow triangle arrow)

- Interface implementation: A implements B (dashed line with hollow triangle)

- Dependency: A depends on B (dashed arrow)

Example — Task Management Domain Model:

┌────────────────┐         ┌────────────────┐
│    Project     │1       *│      Task      │
│────────────────│─────────│────────────────│
│ id: UUID       │         │ id: UUID       │
│ name: str      │         │ title: str     │
│ owner: User    │         │ description:str│
│────────────────│         │ due_date: date │
│ add_task()     │         │ priority: Enum │
│ get_tasks()    │         │ status: Enum   │
└────────────────┘         │────────────────│
                           │ assign(user)   │
                           │ complete()     │
                           └───────┬────────┘
                                   │* assignees
                           ┌───────┴────────┐
                           │      User      │
                           │────────────────│
                           │ id: UUID       │
                           │ email: str     │
                           │ role: Enum     │
                           └────────────────┘

3.2.3 Sequence Diagrams

Sequence diagrams show how objects interact over time to accomplish a specific use case. They are valuable for documenting the flow of a complex operation, particularly when multiple components or services are involved.

Example — Assigning a task:

Client     API Gateway     TaskService     UserService NotificationService
│               │               │               │               │
│ POST /assign  │               │               │               │
│──────────────>│               │               │               │
│               │ assign(id,email)              │               │
│               │──────────────>│               │               │
│               │               │ getUser(email)│               │
│               │               │──────────────>│               │
│               │               │     user      │               │
│               │               │<──────────────│               │
│               │               │ notify(user)                  │
│               │               │──────────────────────────────>│
│               │               │ email sent                    │
│               │               │<──────────────────────────────│
│               │    200 OK     │               │               │
│<──────────────│               │               │               │

3.2.4 Component Diagrams

Component diagrams show the high-level organisation of a system into components and their dependencies. They bridge the gap between architecture diagrams and class diagrams.

Example — Task Management API components:

┌────────────────────────────────────────────────────────────┐
│                    Task Management API                     │
│                                                            │
│  ┌─────────────┐    ┌──────────────┐   ┌─────────────┐     │
│  │  REST API   │───>│   Service    │──>│ Repository  │     │
│  │  (FastAPI)  │    │    Layer     │   │   Layer     │     │
│  └─────────────┘    └──────────────┘   └──────┬──────┘     │
│                                               │            │
│  ┌─────────────┐                       ┌──────┴──────┐     │
│  │    Auth     │                       │ PostgreSQL  │     │
│  │    (JWT)    │                       │  Database   │     │
│  └─────────────┘                       └─────────────┘     │
│                                                            │
│  ┌───────────────────────────────────────────────────┐     │
│  │               Notification Service                │     │
│  │               (Email via SendGrid)                │     │
│  └───────────────────────────────────────────────────┘     │
└────────────────────────────────────────────────────────────┘

3.3 Architectural Patterns

An architectural pattern is a high-level strategy for organising the major components of a system. Selecting the right architectural pattern for a system’s requirements is one of the most consequential decisions a software team makes — and one of the hardest to reverse.

3.3.1 Layered (N-Tier) Architecture

The layered pattern organises a system into horizontal layers, where each layer serves the layer above it and depends only on the layer below it (Buschmann et al., 1996).

┌──────────────────────────────┐
│      Presentation Layer      │  (HTTP endpoints, request/response)
├──────────────────────────────┤
│     Business Logic Layer     │  (Services, domain logic, rules)
├──────────────────────────────┤
│      Data Access Layer       │  (Repositories, ORM, queries)
├──────────────────────────────┤
│        Database Layer        │  (PostgreSQL, Redis, etc.)
└──────────────────────────────┘

Strengths: Simple to understand; good separation of concerns; easy to test each layer independently.

Weaknesses: Can lead to “pass-through” layers that add no logic; performance overhead from passing data through many layers; tendency toward monolithic deployment.

Suitable for: Business applications, CRUD-heavy APIs, systems where the team is primarily familiar with this pattern.
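The dependency rule (each layer talks only to the layer directly below it) can be shown in miniature. In this sketch a dictionary stands in for the database, and all class and function names are hypothetical; a real system would put a web framework at the top and an ORM or driver at the bottom.

```python
class TaskStore:
    """Data access layer: the only layer that touches storage (a dict here)."""

    def __init__(self) -> None:
        self._rows: dict[int, str] = {}
        self._next_id = 1

    def insert(self, title: str) -> int:
        task_id = self._next_id
        self._rows[task_id] = title
        self._next_id += 1
        return task_id


class TaskService:
    """Business logic layer: applies rules, then delegates to the layer below."""

    def __init__(self, store: TaskStore) -> None:
        self._store = store

    def create_task(self, title: str) -> int:
        if not title.strip():
            raise ValueError("Title cannot be empty")
        return self._store.insert(title.strip())


def handle_create_task(service: TaskService, payload: dict) -> tuple[int, dict]:
    """Presentation layer: turns a request payload into a (status, body) response."""
    try:
        task_id = service.create_task(payload.get("title", ""))
    except ValueError as exc:
        return 400, {"error": str(exc)}
    return 201, {"id": task_id}


service = TaskService(TaskStore())
print(handle_create_task(service, {"title": "Write report"}))  # (201, {'id': 1})
print(handle_create_task(service, {"title": "   "}))  # (400, {'error': 'Title cannot be empty'})
```

Note that the handler never touches `TaskStore` directly, which is what lets each layer be tested on its own.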

3.3.2 Model-View-Controller (MVC)

MVC separates a system into three components (Reenskaug, 1979):

- Model: The data and business logic

- View: The presentation layer (what the user sees)

- Controller: Handles user input and coordinates Model and View

MVC is widely used in web frameworks: Django, Ruby on Rails, and Spring MVC all implement variants of this pattern.

3.3.3 Event-Driven Architecture

In an event-driven architecture, components communicate by producing and consuming events rather than calling each other directly. An event broker (such as Apache Kafka or RabbitMQ) decouples producers from consumers.

Producer ──> [Event Broker] ──> Consumer A
                            ──> Consumer B
                            ──> Consumer C

Strengths: High decoupling; components can scale independently; easy to add new consumers without modifying producers.

Weaknesses: Harder to reason about system state; distributed tracing is complex; eventual consistency requires careful handling.

Suitable for: High-throughput systems, microservices that need loose coupling, real-time notification systems, audit log pipelines.

3.3.4 Microservices

A microservices architecture decomposes a system into small, independently deployable services, each responsible for a single business capability or bounded context (Newman, 2015). Each service has its own database and communicates with others via APIs or events.

Strengths: Services can be deployed, scaled, and rewritten independently; teams can work autonomously on separate services; fault isolation.

Weaknesses: Significant operational complexity (service discovery, distributed tracing, network latency, eventual consistency); not appropriate for small teams or early-stage products.

Suitable for: Large teams (multiple squads, each owning a service); systems where different components have very different scaling requirements.

3.3.5 Monolithic Architecture

A monolith is a single deployable unit containing all the system’s functionality. Despite its reputation, a well-structured monolith is often the right choice for small teams and early-stage systems (Fowler, 2015).

Strengths: Simple to develop, test, and deploy; no network latency between components; easy to refactor across the codebase.

Weaknesses: Entire system must be redeployed for any change; scaling requires scaling the entire application; risk of components becoming tightly coupled over time.

The “Monolith First” principle: Start with a well-structured monolith. Extract services only when you have clear evidence that a specific component needs independent scaling or when team boundaries demand it.

3.4 Design Patterns (Gang of Four)

Design patterns are proven, reusable solutions to commonly occurring problems in software design (Gamma et al., 1994). The original catalog, published by the “Gang of Four” (GoF), describes 23 patterns in three categories:

- Creational: How objects are created

- Structural: How objects are composed

- Behavioural: How objects interact and distribute responsibility

We cover the patterns most commonly encountered in Python backend development.

3.4.1 Singleton (Creational)

Ensures a class has only one instance and provides a global access point to it.

Use case: Database connection pools, configuration objects, logging instances.

# singleton.py
class DatabaseConnection:
    _instance: "DatabaseConnection | None" = None

    def __new__(cls) -> "DatabaseConnection":
        if cls._instance is None:
            cls._instance = super().__new__(cls)
            cls._instance._connect()
        return cls._instance

    def _connect(self) -> None:
        # Initialise the connection once
        self.connection = "connected"  # placeholder

    def query(self, sql: str) -> list:
        # Execute query using self.connection
        return []


# Both variables point to the same instance
db1 = DatabaseConnection()
db2 = DatabaseConnection()
assert db1 is db2  # True

Caution: Singletons introduce global state, which can make testing difficult. In Python, dependency injection (passing the instance explicitly) is often preferable.

3.4.2 Factory Method (Creational)

Defines an interface for creating objects but lets subclasses decide which class to instantiate.

Use case: Creating notification objects (email, SMS, push) based on user preference.

# factory.py
from abc import ABC, abstractmethod


class Notification(ABC):
    @abstractmethod
    def send(self, message: str, recipient: str) -> None: ...


class EmailNotification(Notification):
    def send(self, message: str, recipient: str) -> None:
        print(f"Sending email to {recipient}: {message}")


class SMSNotification(Notification):
    def send(self, message: str, recipient: str) -> None:
        print(f"Sending SMS to {recipient}: {message}")


def create_notification(channel: str) -> Notification:
    """Factory function: returns the appropriate Notification subclass."""
    channels: dict[str, type[Notification]] = {
        "email": EmailNotification,
        "sms": SMSNotification,
    }
    if channel not in channels:
        raise ValueError(f"Unknown notification channel: {channel}")
    return channels[channel]()


# Usage
notifier = create_notification("email")
notifier.send("Your task has been assigned.", "alice@example.com")

3.4.3 Observer (Behavioural)

Defines a one-to-many dependency between objects so that when one object changes state, all its dependents are notified automatically.

Use case: Event systems, UI data binding, notification pipelines.

# observer.py
from abc import ABC, abstractmethod


class EventListener(ABC):
    @abstractmethod
    def on_event(self, event: dict) -> None: ...


class TaskEventBus:
    def __init__(self) -> None:
        self._listeners: list[EventListener] = []

    def subscribe(self, listener: EventListener) -> None:
        self._listeners.append(listener)

    def publish(self, event: dict) -> None:
        for listener in self._listeners:
            listener.on_event(event)


class EmailNotifier(EventListener):
    def on_event(self, event: dict) -> None:
        if event.get("type") == "task_assigned":
            print(f"Email: task {event['task_id']} assigned to {event['assignee']}")


class AuditLogger(EventListener):
    def on_event(self, event: dict) -> None:
        print(f"Audit log: {event}")


# Usage
bus = TaskEventBus()
bus.subscribe(EmailNotifier())
bus.subscribe(AuditLogger())
bus.publish({"type": "task_assigned", "task_id": "123", "assignee": "alice"})

3.4.4 Strategy (Behavioural)

Defines a family of algorithms, encapsulates each one, and makes them interchangeable.

Use case: Sorting algorithms, payment processing, priority calculation.

# strategy.py
from abc import ABC, abstractmethod
from dataclasses import dataclass
from datetime import date


@dataclass
class Task:
    id: str
    title: str
    due_date: date
    priority: int  # 1 (low) to 4 (critical)


class SortStrategy(ABC):
    @abstractmethod
    def sort(self, tasks: list[Task]) -> list[Task]: ...


class SortByDueDate(SortStrategy):
    def sort(self, tasks: list[Task]) -> list[Task]:
        return sorted(tasks, key=lambda t: t.due_date)


class SortByPriority(SortStrategy):
    def sort(self, tasks: list[Task]) -> list[Task]:
        return sorted(tasks, key=lambda t: t.priority, reverse=True)


class TaskList:
    def __init__(self, strategy: SortStrategy) -> None:
        self._strategy = strategy

    def set_strategy(self, strategy: SortStrategy) -> None:
        self._strategy = strategy

    def get_sorted(self, tasks: list[Task]) -> list[Task]:
        return self._strategy.sort(tasks)

3.4.5 Repository (Architectural Pattern)

While not in the original GoF catalog, the Repository pattern (Fowler, 2002) is essential in modern backend development. It abstracts the data access layer, presenting a collection-like interface to the domain model.

# repository.py
from abc import ABC, abstractmethod
from uuid import UUID
from dataclasses import dataclass
from datetime import date


@dataclass
class Task:
    id: UUID
    title: str
    due_date: date | None = None


class TaskRepository(ABC):
    """Abstract repository: defines the interface."""

    @abstractmethod
    def find_by_id(self, task_id: UUID) -> Task | None: ...

    @abstractmethod
    def find_all_by_project(self, project_id: UUID) -> list[Task]: ...

    @abstractmethod
    def save(self, task: Task) -> Task: ...

    @abstractmethod
    def delete(self, task_id: UUID) -> None: ...


class InMemoryTaskRepository(TaskRepository):
    """In-memory implementation: used in tests."""

    def __init__(self) -> None:
        self._store: dict[UUID, Task] = {}

    def find_by_id(self, task_id: UUID) -> Task | None:
        return self._store.get(task_id)

    def find_all_by_project(self, project_id: UUID) -> list[Task]:
        return list(self._store.values())  # simplified

    def save(self, task: Task) -> Task:
        self._store[task.id] = task
        return task

    def delete(self, task_id: UUID) -> None:
        self._store.pop(task_id, None)

The key benefit: services depend on the abstract TaskRepository, not on a specific database implementation. Swapping a PostgreSQL-backed repository for the in-memory one in tests requires only a different concrete class.

3.5 Design Principles

Design patterns tell you what to do in specific situations. Design principles tell you how to think about design in general. These principles have been distilled from decades of practical experience.

3.5.1 SOLID Principles

The SOLID principles (Martin, 2000) are five guidelines for writing maintainable object-oriented code:

S — Single Responsibility Principle (SRP)

A class should have only one reason to change.

A class that handles HTTP parsing, business logic, and database queries will need to change whenever any of those three concerns changes. Separating them into different classes means each has one reason to change.

# Violates SRP: this class does too much
class TaskService:
    def create_task(self, title: str, user_id: str) -> dict:
        # Business logic
        if not title.strip():
            raise ValueError("Title cannot be empty")
        # Database access (should be in repository)
        db.execute("INSERT INTO tasks ...")
        # Email sending (should be in notification service)
        smtp.send_email(user_id, "Task created")
        return {"id": "...", "title": title}
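For contrast, here is a sketch of how the same behaviour could be split so that each class has one reason to change. The collaborator classes are hypothetical in-memory stand-ins (no real database or SMTP server is involved), and their names are assumptions made for the example.

```python
class TaskRepository:
    """Persistence only (a list stands in for the database)."""

    def __init__(self) -> None:
        self.rows: list[dict] = []

    def insert(self, title: str) -> dict:
        row = {"id": str(len(self.rows) + 1), "title": title}
        self.rows.append(row)
        return row


class Notifier:
    """Notification only (records messages instead of sending email)."""

    def __init__(self) -> None:
        self.sent: list[tuple[str, str]] = []

    def notify(self, user_id: str, message: str) -> None:
        self.sent.append((user_id, message))


class TaskService:
    """Business rules only; storage and notification are delegated."""

    def __init__(self, repo: TaskRepository, notifier: Notifier) -> None:
        self._repo = repo
        self._notifier = notifier

    def create_task(self, title: str, user_id: str) -> dict:
        if not title.strip():
            raise ValueError("Title cannot be empty")
        task = self._repo.insert(title)
        self._notifier.notify(user_id, "Task created")
        return task


repo, notifier = TaskRepository(), Notifier()
service = TaskService(repo, notifier)
print(service.create_task("Write report", "user-1"))  # {'id': '1', 'title': 'Write report'}
print(notifier.sent)                                  # [('user-1', 'Task created')]
```

A change to the storage schema now touches only `TaskRepository`, and a change to the notification channel touches only `Notifier`.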

O — Open/Closed Principle (OCP)

Software entities should be open for extension, but closed for modification.

You should be able to add new behaviour without modifying existing code. The Strategy pattern from Section 3.4.4 is a direct application of OCP: new sort strategies can be added without modifying TaskList.
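A compact, self-contained sketch of this idea follows. It re-declares trimmed versions of the Strategy classes so that it runs on its own; `SortByTitle` is a hypothetical new strategy added for illustration.

```python
from abc import ABC, abstractmethod
from dataclasses import dataclass
from datetime import date


@dataclass
class Task:
    title: str
    due_date: date


class SortStrategy(ABC):
    @abstractmethod
    def sort(self, tasks: list[Task]) -> list[Task]: ...


class SortByDueDate(SortStrategy):
    def sort(self, tasks: list[Task]) -> list[Task]:
        return sorted(tasks, key=lambda t: t.due_date)


class TaskList:
    """Closed for modification: this class never changes when strategies are added."""

    def __init__(self, strategy: SortStrategy) -> None:
        self._strategy = strategy

    def get_sorted(self, tasks: list[Task]) -> list[Task]:
        return self._strategy.sort(tasks)


class SortByTitle(SortStrategy):
    """Open for extension: new behaviour arrives as a new class (hypothetical)."""

    def sort(self, tasks: list[Task]) -> list[Task]:
        return sorted(tasks, key=lambda t: t.title.lower())


tasks = [Task("Write tests", date(2025, 2, 1)), Task("Deploy", date(2025, 3, 1))]
print([t.title for t in TaskList(SortByDueDate()).get_sorted(tasks)])  # ['Write tests', 'Deploy']
print([t.title for t in TaskList(SortByTitle()).get_sorted(tasks)])    # ['Deploy', 'Write tests']
```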

L — Liskov Substitution Principle (LSP)

Objects of a subclass should be substitutable for objects of the superclass without altering program correctness.

If InMemoryTaskRepository is a subclass of TaskRepository, any code that works with TaskRepository must work identically with InMemoryTaskRepository. Violating LSP typically indicates that the inheritance relationship is wrong.
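A violation is easiest to recognise by example. In the sketch below, the class names are hypothetical; `ArchivedTaskStore` inherits from `TaskStore` but refuses an operation the base type promises, so code written against the base type breaks when handed the subclass.

```python
class TaskStore:
    """Base type: callers may rely on save() storing the title."""

    def __init__(self) -> None:
        self.titles: list[str] = []

    def save(self, title: str) -> None:
        self.titles.append(title)


class ArchivedTaskStore(TaskStore):
    """Violates LSP: refuses an operation that the base type promises."""

    def save(self, title: str) -> None:
        raise NotImplementedError("Archived stores are read-only")


def import_titles(store: TaskStore, titles: list[str]) -> int:
    """Written against TaskStore; should work with any substitutable subclass."""
    for title in titles:
        store.save(title)
    return len(store.titles)


print(import_titles(TaskStore(), ["a", "b"]))  # 2
try:
    import_titles(ArchivedTaskStore(), ["a", "b"])
except NotImplementedError as exc:
    print(f"LSP violation surfaced at runtime: {exc}")
```

One common remedy is to stop inheriting and give the read-only store its own, narrower interface.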

I — Interface Segregation Principle (ISP)

Clients should not be forced to depend on interfaces they do not use.

Rather than one large interface, prefer several small, focused ones. A ReadOnlyTaskRepository interface (with only find_by_id and find_all_by_project) is more appropriate for a reporting service than a full TaskRepository that includes save and delete.

D — Dependency Inversion Principle (DIP)

High-level modules should not depend on low-level modules. Both should depend on abstractions.

# Violates DIP: TaskService depends directly on the concrete PostgreSQL implementation
class TaskService:
    def __init__(self) -> None:
        self.repo = PostgresTaskRepository()  # concrete dependency


# Follows DIP: TaskService depends on the abstract interface
class TaskService:
    def __init__(self, repo: TaskRepository) -> None:
        self.repo = repo  # injected abstraction

This is dependency injection — the concrete implementation is passed in from outside, typically by an application container. It makes TaskService testable with InMemoryTaskRepository.

3.5.2 DRY: Don’t Repeat Yourself

Every piece of knowledge must have a single, unambiguous, authoritative representation within a system. (Hunt & Thomas, 1999)

Duplicated code is duplicated knowledge. When the logic changes (and it will), you must find and update every copy. The solution is not always to extract a function — sometimes the duplication is accidental and the two pieces of code will diverge. Use judgment: extract when the duplication represents the same concept, not just the same syntax.
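As an illustration of duplication that does represent the same concept, suppose the rule for a valid title is needed both when creating and when renaming a task. The sketch below keeps that rule in one place; MAX_TITLE_LENGTH and the function names are hypothetical.

```python
MAX_TITLE_LENGTH = 200  # hypothetical business rule


def normalise_title(raw: str) -> str:
    """Single authoritative definition of what counts as a valid title."""
    title = raw.strip()
    if not title:
        raise ValueError("Title cannot be empty")
    if len(title) > MAX_TITLE_LENGTH:
        raise ValueError(f"Title cannot exceed {MAX_TITLE_LENGTH} characters")
    return title


def create_task(title: str) -> dict:
    return {"title": normalise_title(title), "status": "open"}


def rename_task(task: dict, new_title: str) -> dict:
    # Same rule, same function: a change to the rule is made exactly once.
    return {**task, "title": normalise_title(new_title)}
```

By contrast, if a project name happened to share the same length limit today, routing it through normalise_title would couple two rules that may well diverge later.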

3.5.3 Composition Over Inheritance

Prefer composing objects from smaller, focused components over building deep inheritance hierarchies. Inheritance creates tight coupling between parent and child; composition allows components to be mixed and matched.
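A small sketch of the difference: an inheritance-based design for exporting tasks tends towards one subclass per combination of format and destination, whereas a composed TaskExporter simply holds a formatter and a sink. All names here (TaskExporter, to_json, to_csv, the "id" and "title" columns) are assumptions made for the example.

```python
import csv
import io
import json
from dataclasses import dataclass
from typing import Callable

Formatter = Callable[[list[dict]], str]
Sink = Callable[[str], None]


def to_json(tasks: list[dict]) -> str:
    return json.dumps(tasks, sort_keys=True)


def to_csv(tasks: list[dict]) -> str:
    buffer = io.StringIO()
    writer = csv.DictWriter(buffer, fieldnames=["id", "title"], lineterminator="\n")
    writer.writeheader()
    writer.writerows(tasks)
    return buffer.getvalue()


@dataclass
class TaskExporter:
    """Composed from two independent parts instead of a class hierarchy."""

    formatter: Formatter
    sink: Sink

    def export(self, tasks: list[dict]) -> None:
        self.sink(self.formatter(tasks))
```

Any formatter can be paired with any sink, for example TaskExporter(formatter=to_csv, sink=print), without creating a new class for each pairing.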

3.5.4 Hollywood Principle

“Don’t call us, we’ll call you.”

High-level components should control when and how low-level components are used, not the reverse. This is the principle behind inversion of control (IoC) frameworks and the Observer pattern.
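A minimal Observer-style sketch of this idea follows. EventBus, its subscribe and publish methods, and the "task_assigned" event name are hypothetical; real IoC frameworks offer far more, but the direction of control is the same.

```python
from typing import Callable

Handler = Callable[[dict], None]


class EventBus:
    """High-level component: it decides when registered handlers run."""

    def __init__(self) -> None:
        self._handlers: dict[str, list[Handler]] = {}

    def subscribe(self, event_name: str, handler: Handler) -> None:
        self._handlers.setdefault(event_name, []).append(handler)

    def publish(self, event_name: str, payload: dict) -> None:
        # "We'll call you": handlers are invoked by the bus, in subscription order.
        for handler in self._handlers.get(event_name, []):
            handler(payload)


audit_log: list[str] = []


def record_assignment(payload: dict) -> None:
    """Low-level component: registers itself and waits to be called."""
    audit_log.append(f"{payload['task_id']} -> {payload['assignee']}")


bus = EventBus()
bus.subscribe("task_assigned", record_assignment)
bus.publish("task_assigned", {"task_id": "t1", "assignee": "ana"})
```

record_assignment never polls for assignments or calls into the code that creates them; it only states which event it is interested in.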

3.6 Clean Code

Clean code is code that is easy to read, understand, and modify (Martin, 2008). It is not about aesthetics — it is about reducing the cognitive load on the next developer who reads it (who is often you, six months later).

3.6.1 Naming

Names should reveal intent. Avoid abbreviations, single-letter variables (except in well-established contexts like loop counters), and misleading names.

# Poor naming
def proc(d: list, f: bool) -> list:
    r = []
    for i in d:
        if i["s"] == 1 or f:
            r.append(i)
    return r


# Clean naming
def get_active_tasks(tasks: list[dict], include_archived: bool = False) -> list[dict]:
    return [
        task for task in tasks
        if task["status"] == 1 or include_archived
    ]

3.6.2 Functions

Functions should do one thing and do it well. A function whose name needs an “and” to describe it (e.g., validate_and_save_task) is doing too much. Keep functions short, typically 5–20 lines. If a function is longer, it is probably doing more than one thing.
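One way to split such a function is sketched below. The validation rules, the dictionary-based store, and the function names are assumptions chosen to keep the example self-contained.

```python
VALID_PRIORITIES = {"low", "medium", "high"}  # hypothetical rule


def validate_task(task: dict) -> list[str]:
    """Return a list of validation errors; an empty list means valid."""
    errors = []
    if not task.get("title", "").strip():
        errors.append("title is required")
    if task.get("priority") not in VALID_PRIORITIES:
        errors.append("priority must be low, medium, or high")
    return errors


def save_task(task: dict, store: dict[str, dict]) -> None:
    """Persist the task; knows nothing about validation."""
    store[task["id"]] = task


def create_task(task: dict, store: dict[str, dict]) -> list[str]:
    """Coordinates the two steps; each step can be tested on its own."""
    errors = validate_task(task)
    if not errors:
        save_task(task, store)
    return errors
```

The coordinating function still exists, but it now reads as a sequence of named steps, and the validation rules can be exercised without any storage at all.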

3.6.3 Comments

Write code that does not need comments. When a comment is necessary, explain why, not what — the code already shows what it does.

# Poor comment: explains what the code does, which is obvious

# Loop through tasks and add them to the result list
result = [task for task in tasks if task.is_active()]

# Good comment: explains a non-obvious constraint

# Skip soft-deleted tasks: the UI shows these with a strikethrough
# but the API should not return them in list endpoints
result = [task for task in tasks if not task.deleted_at]

3.6.4 Code Structure and Style

Consistent structure and formatting reduce cognitive load. For Python, follow PEP 8 — the official style guide — and use ruff (introduced in Chapter 1) to enforce it automatically.

Key conventions:

- 4-space indentation

- Maximum line length: PEP 8 itself recommends 79 characters; many teams configure a limit between 88 (ruff’s default) and 120 (team decision)

- Two blank lines between top-level definitions

- Type annotations on all function signatures (enforced by mypy)

3.7 AI-Assisted Design

AI tools can accelerate the design phase in several ways, but each requires critical evaluation.

3.7.1 Generating Architecture Diagrams from Specifications

Given a requirements document, an LLM can suggest an initial architecture:

import anthropic

client = anthropic.Anthropic()

requirements = """
System: Task Management API
- Multi-tenant SaaS for software teams (10–500 users per tenant)
- REST API backend; no frontend in scope
- Tasks can be created, assigned, updated, and completed
- Email notifications on assignment
- Must support 1,000 concurrent users, 200ms p95 response time
- Data must be isolated per tenant
"""

response = client.messages.create(
    model="claude-opus-4-7",
    max_tokens=1024,
    messages=[
        {
            "role": "user",
            "content": f"""You are a software architect.
Based on the following requirements, suggest an appropriate
architectural pattern and explain your reasoning. Identify
the key components and their responsibilities.
Flag any requirements that represent significant architectural risk.

Requirements:
{requirements}""",
        }
    ],
)

print(response.content[0].text)

The output is a starting point for discussion, not a final decision. Treat it as a first draft from a knowledgeable junior architect who has not seen your organisation’s constraints.

3.7.2 Generating Code Scaffolds

AI excels at generating boilerplate code from a class diagram or interface definition:

# Reuses the `client` created in the Section 3.7.1 example
response = client.messages.create(
    model="claude-opus-4-7",
    max_tokens=2048,
    messages=[
        {
            "role": "user",
            "content": """Generate a Python implementation of a TaskRepository
using the Repository pattern. The concrete implementation should use
a plain dictionary as an in-memory store (for testing).
Use Python 3.11 type hints throughout. Include docstrings only where
the behaviour is non-obvious. Follow PEP 8.""",
        }
    ],
)

print(response.content[0].text)

Always review AI-generated scaffolds for:

- Correct use of type hints

- Adherence to the interface contract

- Missing edge cases (null handling, empty collections)

- Security issues (SQL injection if a DB implementation is generated)

3.8 Tutorial: AI-Assisted System Design

This tutorial walks through using AI to assist with the design of the course project API, then critically reviewing the output.

Step 1: Generate a Component Design

# design_assistant.py
import anthropic

client = anthropic.Anthropic()


def generate_component_design(requirements: str) -> str:
    response = client.messages.create(
        model="claude-opus-4-7",
        max_tokens=2048,
        messages=[
            {
                "role": "user",
                "content": f"""You are a software architect designing a Python
REST API. Based on the requirements below, produce:
1. A list of the main components (services, repositories, models)
2. The key interface (method signatures) for each component
3. A brief rationale for any significant design decision

Use Python 3.11 type hints in all interface definitions.
Do not generate implementation code; interfaces only.

Requirements:
{requirements}""",
            }
        ],
    )
    return response.content[0].text


requirements = """
Task Management API:
- Users can create, read, update, and delete tasks
- Tasks belong to projects; projects belong to organisations
- Tasks have: title, description, due_date, priority, status, assignee
- Project managers can assign tasks; regular users can only update their own
- Email notification sent when a task is assigned
- All endpoints require JWT authentication
"""

design = generate_component_design(requirements)
print(design)

Step 2: Critically Review the Output

When reviewing AI-generated design, ask:

- Does each component have a single responsibility? If a service is described as doing X, Y, and Z, it needs to be split.

- Are dependencies pointing the right direction? High-level business logic should not depend on low-level infrastructure.

- Is the interface testable? Can you write a test without a real database or email server?

- Are edge cases represented? What happens when a task is assigned to a user who has left the project?

- Is the interface consistent? Do all repository methods follow the same conventions?

Document your review findings and revise the design before implementing.

Chapter 4: Testing, Quality, and CI/CD

“Testing shows the presence, not the absence of bugs.” — Edsger W. Dijkstra

Learning Objectives

By the end of this chapter, you will be able to:

- Explain the different levels of software testing and when to apply each.

- Write unit tests and integration tests in Python using pytest.

- Measure and interpret code coverage and understand its limitations.

- Configure a CI/CD pipeline using GitHub Actions.

- Apply static analysis and code review techniques to catch defects early.

- Critically evaluate AI-generated tests and understand why AI cannot replace a thoughtful testing strategy.

4.1 Why Testing Matters

Software testing is the process of executing software with the intent of finding defects. It is not an optional step at the end of development — it is a discipline that runs throughout the entire software development lifecycle.

Testing serves several purposes:

- Defect detection: Finding bugs before they reach users

- Regression prevention: Ensuring that new changes do not break existing functionality

- Design feedback: Tests that are hard to write often indicate design problems

- Documentation: A well-named test suite describes exactly what a system does

- Confidence: A passing test suite gives the team confidence to make changes

The question is not whether to test, but how to test effectively given limited time and resources.

4.2 The Testing Pyramid

The testing pyramid (Cohn, 2009) describes the ideal distribution of test types:

  ┌───────────┐
  │   E2E /   │     Few, slow, fragile: test critical paths only
  │ UI Tests  │
 ┌┴───────────┴┐
 │ Integration │    Some: test component interactions
 │    Tests    │
┌┴─────────────┴┐
│  Unit Tests   │   Many: fast, isolated, precise
└───────────────┘

Unit tests are the foundation: fast, isolated, numerous. They test individual functions or classes in isolation.

Integration tests verify that components work correctly together — services calling repositories, API handlers interacting with business logic.

End-to-end (E2E) tests exercise the system as a whole, simulating real user interactions. They are slow, brittle, and expensive to maintain — use them sparingly, for critical user journeys only.

A common rule of thumb puts the ratio at roughly 1:10:100: for every E2E test, write about 10 integration tests and about 100 unit tests. The exact ratio varies by system, but the principle holds: favour fast, isolated tests over slow, coupled ones.
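To make the bottom two layers concrete, the sketch below shows a unit test and an integration test for the same feature. The is_overdue rule, the SqliteTaskRepository class, and the table layout are assumptions invented for the example; SQLite's in-memory mode is used so that the integration test still runs without external services.

```python
import sqlite3
from datetime import date


def is_overdue(due_date: date, today: date) -> bool:
    """Pure business rule: no I/O, so it can be unit-tested in isolation."""
    return due_date < today


class SqliteTaskRepository:
    def __init__(self, conn: sqlite3.Connection) -> None:
        self._conn = conn
        self._conn.execute(
            "CREATE TABLE IF NOT EXISTS tasks (title TEXT, due_date TEXT)"
        )

    def save(self, title: str, due_date: date) -> None:
        # Parameterised query: values are never interpolated into the SQL.
        self._conn.execute(
            "INSERT INTO tasks (title, due_date) VALUES (?, ?)",
            (title, due_date.isoformat()),
        )

    def overdue_titles(self, today: date) -> list[str]:
        rows = self._conn.execute(
            "SELECT title, due_date FROM tasks ORDER BY title"
        ).fetchall()
        return [
            title for title, due in rows
            if is_overdue(date.fromisoformat(due), today)
        ]


def test_is_overdue_unit() -> None:
    # Unit test: one function, no collaborators.
    assert is_overdue(date(2024, 1, 1), today=date(2024, 1, 2))
    assert not is_overdue(date(2024, 1, 2), today=date(2024, 1, 2))


def test_overdue_titles_integration() -> None:
    # Integration test: the rule, the repository, and a real SQL engine together.
    conn = sqlite3.connect(":memory:")
    repo = SqliteTaskRepository(conn)
    repo.save("Ship release", date(2024, 1, 1))
    repo.save("Plan sprint", date(2024, 3, 1))
    assert repo.overdue_titles(today=date(2024, 2, 1)) == ["Ship release"]
    conn.close()
```

The unit test pins down the boundary condition (a task due today is not overdue); the integration test only needs to confirm that the pieces are wired together correctly, which is why fewer of them are required.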

4.3 Black-Box and White-Box Testing

Testing approaches can be categorised by how much knowledge of the internal implementation the tester uses.

4.3.1 Black-Box Testing

In black-box testing, the tester has no knowledge of the internal implementation. Tests are derived entirely from the specification — inputs are provided and outputs are verified against expected behaviour.